A/B testing is at the core of the PPC industry, whether it’s Conversion Rate Optimisation (CRO) for landing pages or simply testing which headline is most effective. But such testing relies on statistical significance to help you decide whether a result is reliable or simply down to fluke. If statistical significance is new to you, then my colleague Jonathan has a guide to show you how it underpins digital marketing. Statistical significance is a complicated topic, with aspects of its methods still up for debate. However, I’m not going to look too deeply into the maths; I’ll just give you some quick tips you can implement today.

1. Use A Calculator

It may sound obvious, but it’s all too easy to think a test has produced a clear winner when it’s really a dud. Calculators take the guesswork out of testing. They ensure that your own biases don’t affect your interpretation of the test’s results. Although it may sometimes feel pointless running through your numbers when you believe you already know the answer, you’ll be surprised how often the result is not what you expect.

Now, which calculator should you use, and does it even matter? There are several calculators, each of which will try to sell you its USP, whether that’s Bayesian statistics or some special formula. But is there really a huge difference to you as a tester? Personally, I’m a huge maths nerd and the methods used to get the results interest me greatly. However, when you’re just trying to work out which of your headlines performed best in a split test, there are more important things to spend your time on than worrying about which calculator to use. Just grab one and use it. It doesn’t take long, and it can save you lots of time further down the line because you won’t be left wondering why your test didn’t improve your results. (But if you’ve got the time, this article is awesome.)
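If you’re curious what a typical calculator is doing under the hood, here is a minimal sketch of one common approach, a two-sided two-proportion z-test. This is an assumption for illustration: real calculators may use different methods (Bayesian ones certainly do), and the function name and example numbers below are made up.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates.

    conv_a / conv_b: conversions for control and variant
    n_a / n_b: visitors (or clicks) for control and variant
    Returns (z, p_value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions perform the same
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so nothing to detect
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical headline test: 200/1000 conversions vs 250/1000
z, p = two_proportion_z_test(200, 1000, 250, 1000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```

The normal approximation used here gets shaky with very few conversions, which is one more reason not to read too much into small tests.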

2. Optimise Low Traffic Sites

When you use a calculator, it will usually tell you how long the test needs to run to reach statistical significance. However, getting the hundreds or even thousands of conversions required for even a basic test can be very daunting for small or niche accounts. But don’t despair: there are still ways to test scientifically rather than relying on your gut.
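To get a feel for why low-traffic accounts struggle, here is a rough sketch of the standard sample-size approximation for comparing two conversion rates. The 80% power default and the function name are my assumptions, not something any particular calculator is guaranteed to use.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_uplift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant to detect a given uplift.

    baseline_rate: current conversion rate, e.g. 0.02 for 2%
    relative_uplift: smallest uplift worth detecting, e.g. 0.20 for +20%
    alpha: significance threshold (0.05 means 95% significance)
    power: chance of detecting the uplift if it really exists (assumed 80%)
    """
    if relative_uplift == 0:
        raise ValueError("relative_uplift must be non-zero")
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_uplift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical account: 2% conversion rate, hoping to detect a 20% uplift
needed = visitors_per_variant(0.02, 0.20)
print(f"Visitors needed per variant: {needed}")
```

With these example inputs the answer is on the order of 21,000 visitors per variant, which shows how quickly the requirement grows when the baseline rate or the expected uplift is small.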

- Make Sure Your Conversion Tracking Is Working Properly

40% of AdWords accounts don’t track conversions at all, which is a shocking figure. But even if you’re in the 60% that do track conversions, that doesn’t mean you can rest on your laurels. Half of accounts that use conversion tracking don’t have it set up properly, so they’re missing some of the key aspects of their business. Before you worry about having low conversions, make sure you are recording all the conversions you should be.

- You Can Make More Conversions

Obviously, you can’t just create more sales, so instead you can assign conversions to other less important actions users may take on your site. There are many options but essentially you’re looking for anything that you’d like a user to do on your website. This is especially true if it carries any intent with it. Examples include:

- Using the on-site search

- Partially filling forms

- Viewing multiple product pages

- Making a phone call, if you’re not already tracking that correctly

If they’re engaging with your website, you want to know about it, so you can maximize their engagement. More data = better optimization = happier accounts. Unfortunately, there are two tricky things about using this method. First, it will make it very hard to compare your current data to your historical data, because you’ll suddenly get a big boost in your conversions but not in your actual sales. Although you may recognise this, scripts and automated rules won’t. Second, because the new conversions will have less value than actual sales, you’ll need to review your CPA targets with the new triggers in mind.

- Don’t Use Conversions

When your ship sinks, it’s time to jump ship.

If you still don’t have enough conversions, or you just don’t like tracking non-value items as conversions, then you can optimize using a different metric. Google Analytics has a range of powerful metrics that PPC account holders can use to measure their users’ engagement. Optimizing for Avg Session Duration has some quirks which make it controversial, but we’ve found that there is generally a correlation between high-converting terms and good session duration. You could therefore use this as an alternative to conversions.

If you’re doing website-based optimization, a more heuristic approach might be advisable. Mouse tracking analysis is an excellent tool for drilling down into how users are interacting with your site. Finally, there really is no substitute for user observation, which can be done on any site regardless of traffic. Shanelle Mullin’s guide for optimizing low traffic sites is great if you have the time and budget to implement the ideas.

3. Keep Track Of Your Tests – Even When They’re Done

Documentation is a pain to do, but it’s a necessary evil when you’re testing. If you set up a test but forget which version was your control and which was your variant, then you’re in trouble. It’s even necessary to keep track of the winners from your tests. False positives (tests that appear to produce a winner when there is no real difference) are more common than you might think. While you can try to mitigate false positives, you can never completely eliminate them. Therefore, it’s important to keep track of your winners, to make sure they keep producing the uplift they once did.

You can do this very easily and quickly through the criminally underutilized label system in AdWords. A simple label for test candidates and recent winners will make your organization so much easier. For even better control and documentation with little hassle, recording your tests in an Excel spreadsheet can go a long way. Having all your past tests stored in one place can save a lot of time. Have we tested this idea before? Do we need to try more adventurous tests? I can see we had a big uplift at this time; what was the test? Answers to these questions can be found in the AdWords change history, but are usually a pain to find. Recording your tests may not be fun, but you will thank yourself for it in the future.
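If you’d rather keep the log in a plain CSV file (which opens fine in Excel), a sketch like the following is one way to do it. The column names and the example test are purely hypothetical; record whatever you’ll actually want to look up later.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class SplitTestRecord:
    """One row in the test log. Columns are illustrative, not a standard."""
    name: str
    start_date: str
    end_date: str
    control: str
    variant: str
    winner: str
    p_value: float
    notes: str = ""


def append_record(handle, record, write_header=False):
    """Write one test record as a CSV row to an open file-like object."""
    columns = [f.name for f in fields(SplitTestRecord)]
    writer = csv.DictWriter(handle, fieldnames=columns)
    if write_header:
        writer.writeheader()
    writer.writerow(asdict(record))


# Example using an in-memory buffer; with a real file you would use
# open("tests.csv", "a", newline="") and write the header only once.
log = io.StringIO()
append_record(
    log,
    SplitTestRecord(
        name="Headline test",
        start_date="2024-01-01",
        end_date="2024-01-29",
        control="Original headline",
        variant="Headline with price",
        winner="variant",
        p_value=0.03,
        notes="Re-check uplift in three months",
    ),
    write_header=True,
)
print(log.getvalue())
```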

4. Don’t Peek At Your Tests

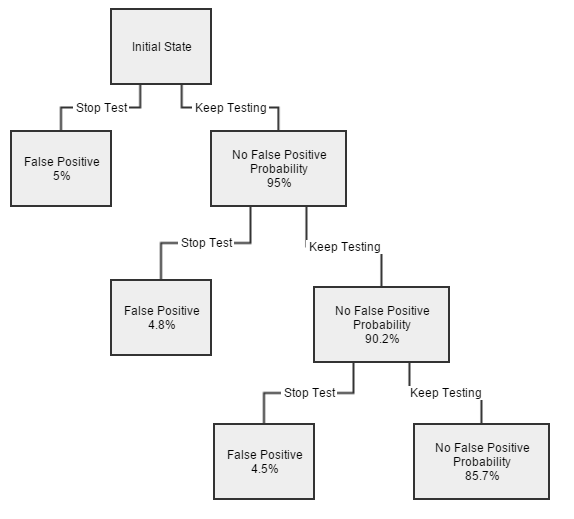

Now, if you did want to generate false positives, there is no better way than to peek at your data. Always set a minimum time the test will run for, or decide on a minimum amount of traffic you want to reach, and don’t stop your test until you reach that threshold, no matter what the circumstances. If you continuously check whether the test has become significant and stop as soon as it has, false positives can make up as much as 80% of your winning results.

Peeking at your results introduces bias. Think of it like sitting an exam where you need 75% to pass and you check your results after every question then stop the moment you hit 75%. If you got the first question right, you’d stop right away but that’s not representative of your actual skill. If you stop when it suits you, you’re much more likely to pass than if you’d taken the whole test.

As you can see in the above A/A test (where the ad competes with a copy of itself), peeking just three times when using 95% significance can bring the risk of a false positive up to nearly 15%! Looking early causes false positives, so don’t do it!
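You can convince yourself of this with a small simulation. The sketch below runs many A/A tests, where both versions share the same true conversion rate, and compares how often a test looks significant at any peek with how often it looks significant only at the planned end. It assumes a simple z-test and made-up traffic numbers, so the exact percentages it prints will differ from the chart and will vary with the settings.

```python
import math
import random


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / std_err
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_aa_tests(runs=1000, looks=3, visitors_per_look=500,
                      rate=0.1, alpha=0.05, seed=0):
    """Return (false positive rate with peeking, rate at the final look only)."""
    rng = random.Random(seed)
    peeking_hits = 0
    final_hits = 0
    for _run in range(runs):
        conv_a = conv_b = visitors = 0
        any_significant = False
        significant = False
        for _look in range(looks):
            # Both arms draw from the same true rate, so any "winner" is a fluke
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_look))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_look))
            visitors += visitors_per_look
            significant = p_value(conv_a, visitors, conv_b, visitors) < alpha
            any_significant = any_significant or significant
        peeking_hits += any_significant  # would have stopped at some peek
        final_hits += significant        # significant at the planned end
    return peeking_hits / runs, final_hits / runs


peeking, final_only = simulate_aa_tests()
print(f"False positives when stopping at any peek: {peeking:.1%}")
print(f"False positives when waiting until the end: {final_only:.1%}")
```

Try increasing `looks` to see how the gap widens the more often you check.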

Conclusion

There are so many pitfalls in statistical significance, it is easy to wonder what the point is. However, it is a vital part of any test and cannot be ignored. So, use a calculator as often as you can, but it doesn’t really matter which one you use. Make sure you are still being scientific with your optimization of low traffic websites by using some nifty tricks. Always keep track of your tests both during and after the test itself and finally don’t peek at your tests before they’re ready.