However, if you search ‘SEO health check’ on Google, you’ll find that most of the results point you towards companies who provide a tool to carry it out for you, which you may have to pay for after a seven-day free trial.

It’s useful to know how to perform an SEO health check yourself rather than relying on paid tools. Not only does it save you some money, but it also teaches you what to look out for on your website in terms of technical and on-page SEO, as well as its content.

The deeper an understanding you have about the health of your website, the easier it will become to plan and implement things to improve its technical foundation and content, which will work wonders for your rankings and visibility.

SEO is a vast topic, so whilst this guide won’t cover absolutely everything, it will aim to cover the key areas that have big impacts on site performance or introduce you to tools that are free, yet incredibly useful and effective.

These key areas are:

- Title tags and meta descriptions

- On-page content

- Duplicate content

- Page speed

- Core Web Vitals

- Mobile friendliness

- Image size

- Broken pages

- HTTP vs. HTTPS

- Robots.txt files

If you’d like to follow along using one of your own pages, these are all the tools that you’ll need to get started:

- MozBar – chrome extension

- Screaming Frog – website crawler

- Yoast plugin – technical SEO and content optimisation WordPress plugin

- Semrush – SEO and content management and research platform

- Siteliner – find duplicate content and broken links

- SmallSEOTools – a bit of everything really!

- Google’s PageSpeed Insights or Pingdom Website Speed Test – site speed and page load times

- Google Analytics – website data and analytics

- tinypng or tinyjpg – image compression tools

- Smush – image compression WordPress plugin

- Httpstatus – bulk URL status checker

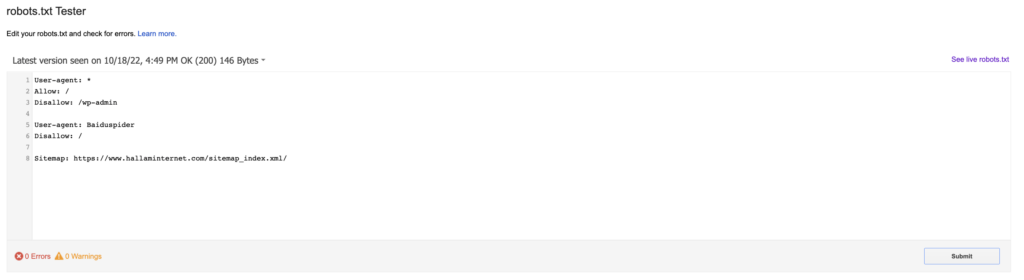

- Google’s robots.txt file tester – shows you whether your robots.txt file blocks Google web crawlers from specific URLs on your site

1. Title tags and meta descriptions

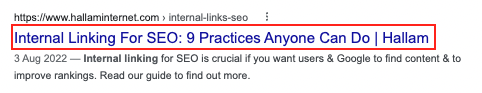

Title tags are important because they essentially tell searchers and search engines what the theme of that particular page is. This helps search engines decide how relevant a page is to a user’s search and acts as a ranking factor.

Meta descriptions, on the other hand, act as a brief summary of what the page contains. They are not a ranking factor, but they can often reinforce a decision to click on your URL. So, make sure they’re written for the user (which you should always do anyway).

A few golden rules for these:

- Avoid duplication – each title tag should be unique, just like the page should be.

It’s also worth writing unique meta descriptions for your priority SEO pages too, but Google frequently ignores your suggestions and will write its own based on what it thinks could better serve the user.

With this in mind, there are higher-priority SEO tasks than writing unique meta descriptions for every page, so don’t spend too much time on this. Just focus on creating unique title tags.

- Include your keyword – this signals the page’s theme. Search engines are very intuitive nowadays, so mentioning it once is enough. No keyword stuffing please!

- Character length – don’t exceed 60 characters in your title tag and 160 in your meta description

- Include your company name – add this to the title tag using a separator like a pipe or dash, similar to Hallam’s above

- Include a CTA – put this in your meta description to encourage searchers to click through onto your website
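If you’d rather check these rules in bulk than by eye, a short script can flag the obvious problems. The sketch below is only an illustration: the function name `audit_head` is made up for this example, it uses Python’s built-in HTML parser, and it assumes you already have each page’s HTML as a string.

```python
from html.parser import HTMLParser

TITLE_MAX = 60         # title tag limit from the rules above
DESCRIPTION_MAX = 160  # meta description limit from the rules above


class HeadParser(HTMLParser):
    """Collects the <title> text and the meta description of one page."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title = ""
        self.description = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self.in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def audit_head(html):
    """Return a list of issues found in one page's title and meta description."""
    parser = HeadParser()
    parser.feed(html)
    title = parser.title.strip()
    issues = []
    if not title:
        issues.append("missing title tag")
    elif len(title) > TITLE_MAX:
        issues.append(f"title is {len(title)} characters (over {TITLE_MAX})")
    if not parser.description:
        issues.append("missing meta description")
    elif len(parser.description) > DESCRIPTION_MAX:
        issues.append(
            f"meta description is {len(parser.description)} characters (over {DESCRIPTION_MAX})"
        )
    return issues
```

An empty list means the page passes these basic checks. It can’t judge whether the title is well written, so it doesn’t replace reading the title yourself.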

How to check your title tags and meta descriptions

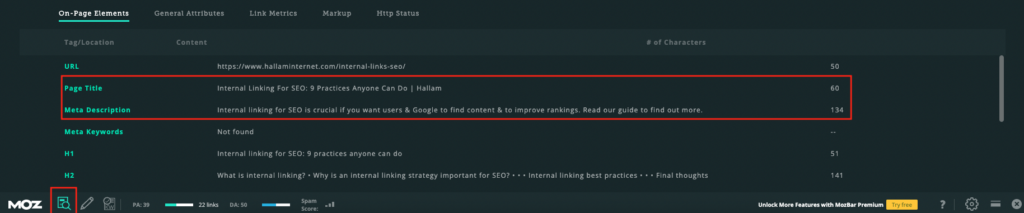

To check on your title tags and meta descriptions a page at a time, download MozBar and click on its page analysis button.

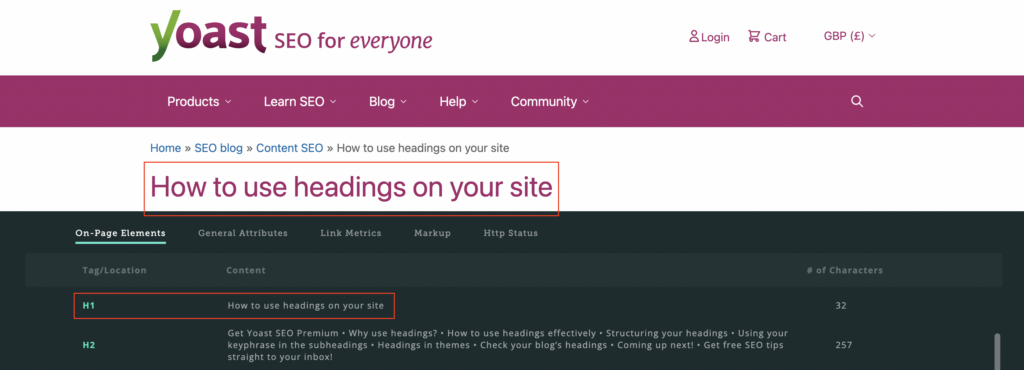

This will bring up a window that shows you information about the page like the URL, title tags, meta descriptions, headings and image alt text.

It’s handy for seeing whether any title tags or meta descriptions are missing on a particular page or if they’re over the character limit.

There are other plugins and extensions that are useful for this sort of thing, such as SEOquake, SEO Peek and Sitechecker.

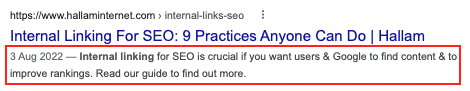

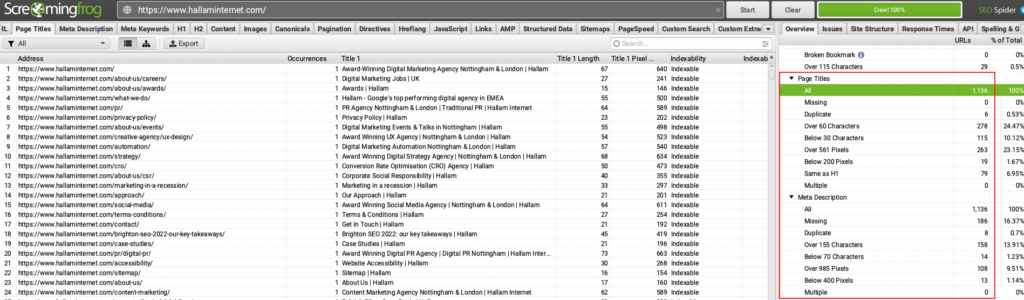

If your website has a large number of pages, you can use the free version of Screaming Frog – a website crawler tool – to find a summary of your site’s content.

This will allow you to identify any title tags and meta descriptions that are duplicated, too long, too short or that appear more than once on the same page:

It can be quite time consuming to optimise your title tags, but following this guidance should help you to see results.
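If you export your URLs and titles from a crawl, duplicates can also be grouped with a few lines of Python. This is a rough sketch rather than a finished tool: `find_duplicate_titles` is a hypothetical helper, and it assumes the export has been loaded into a dictionary of URL to title.

```python
from collections import defaultdict


def find_duplicate_titles(titles_by_url):
    """Group URLs that share the same title tag, ignoring case and extra spaces."""
    groups = defaultdict(list)
    for url, title in titles_by_url.items():
        key = " ".join(title.lower().split())  # normalise case and whitespace
        groups[key].append(url)
    # Keep only the titles that are used by more than one URL
    return {title: sorted(urls) for title, urls in groups.items() if len(urls) > 1}
```

For example, `find_duplicate_titles({"/a": "Blue Widgets | Example", "/b": "blue widgets | example"})` would group both URLs under the same title, so you know which pages need a rewrite.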

2. On-page content

As part of an SEO health check, heading tags (such as your H1s, H2s and H3s), image alt text and keyword density should all be considered, but please note this shouldn’t be at the expense of how engaging and useful your content is.

There’s no point in having your content found if it’s spammy, repetitive and simply doesn’t help the user.

Here are some tips when it comes to content:

Word count

Word count isn’t actually a ranking factor, so there’s no hard and fast rule. It’s all about quality over quantity when it comes to on-page content.

If you come across a tool, such as Semrush’s SEO content template or the Yoast plugin, that gives you a specific word count to meet, this will be down to how long your competitors’ pages are.

Of course, not all page lengths are the same. For example, a blog tends to be longer than a product page. We’d recommend searching your target keyword and seeing how long your competitors’ pages are. This should give you a rough idea.

The key things to remember are:

- How helpful is your content?

- Does it match your searcher’s intent?

- How well structured is your page?

- Are you using bullet points or numbered lists?

- Are you adding images, videos or infographics?

- Are you including links to other pages on your website or external websites?

If you tick the optimisation boxes, then there’s no need to worry about how long the page is.

Keyword density

Once you have decided on your target keyword, make sure you mention it naturally (no keyword stuffing again please!) throughout the page.

However, is there a perfect percentage of keywords to use that can positively affect your ranking?

No, just like word count, there’s no magic number that will achieve the best results for everyone. That’s not how Google works these days.

If there are too few keywords, search engines might find it difficult to understand what the page is about. However, if too many keywords are used, the content will look like it’s designed for SEO purposes, which Google doesn’t like.

So how do you find the balance?

It all boils down to the quality of the content and whether or not it genuinely helps the user. Ring any bells?

Read the content aloud once it’s been written and decide for yourself if it’s natural and useful. Tweak it if it sounds robotic, keyword stuffy or generally doesn’t sound right.

Whatever you do, don’t obsess over how many times your keywords are mentioned.

Headings

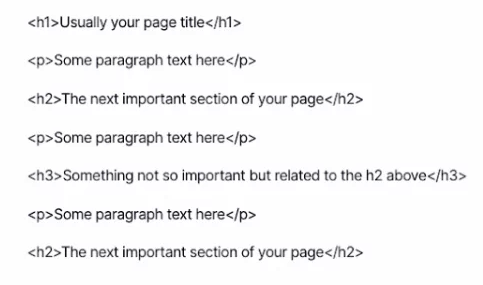

Headings are another crucial part of on-page SEO. You may have also heard them being referred to as headers, but these are the HTML elements that appear as <h1>, <h2> and <h3>.

They’re responsible for organising your content for readers so that they can find the information they’re looking for.

Headings also help search engines to identify which part of your content is most important and relevant based on search intent.

Within the H1 tag, you should include your target keyword and ensure that this is consistent with your title tag as users will be expecting the content to follow the headline they have just clicked on in the search results.

Other headings such as H2s and H3s should also be included on your pages to logically structure your content. If it feels natural to do so, you can also include supporting keywords here.

You can use MozBar to see what your existing headings are:

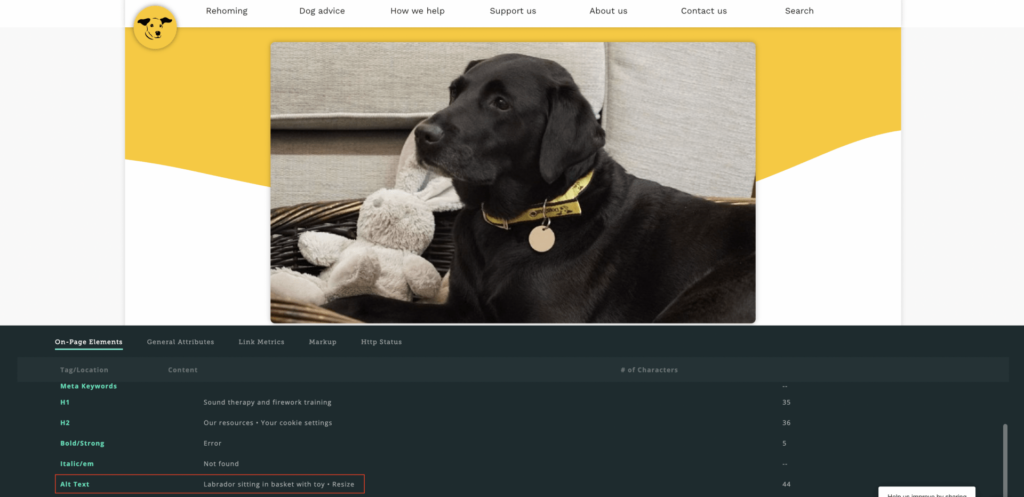
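The same heading outline can be pulled out with a short script if you want to check lots of pages. This is a minimal sketch using Python’s built-in HTML parser; the helper names are made up for this example, and treating “exactly one H1” as the target is our assumption rather than a strict rule.

```python
from html.parser import HTMLParser


class HeadingParser(HTMLParser):
    """Records the text of every h1, h2 and h3 in document order."""

    def __init__(self):
        super().__init__()
        self.current = None
        self.headings = []  # list of (tag, text) pairs

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "h2", "h3"):
            self.current = tag
            self.headings.append((tag, ""))

    def handle_endtag(self, tag):
        if tag == self.current:
            self.current = None

    def handle_data(self, data):
        if self.current:
            tag, text = self.headings[-1]
            self.headings[-1] = (tag, text + data)


def list_headings(html):
    """Return the page's headings as (tag, text) pairs with tidy spacing."""
    parser = HeadingParser()
    parser.feed(html)
    return [(tag, " ".join(text.split())) for tag, text in parser.headings]


def h1_matches_keyword(html, keyword):
    """True if the page has exactly one H1 and it contains the target keyword."""
    h1s = [text for tag, text in list_headings(html) if tag == "h1"]
    return len(h1s) == 1 and keyword.lower() in h1s[0].lower()
```

Running `list_headings` on a page gives you its outline at a glance, which makes missing H1s or skipped heading levels easy to spot.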

Image alt text

Many websites miss out on the opportunity to rank in Google image searches by not optimising their images.

Image alt text tells search engines what your image is displaying and is used to improve accessibility for users with screen readers.

MozBar can help you see if any of your existing images have alt text:

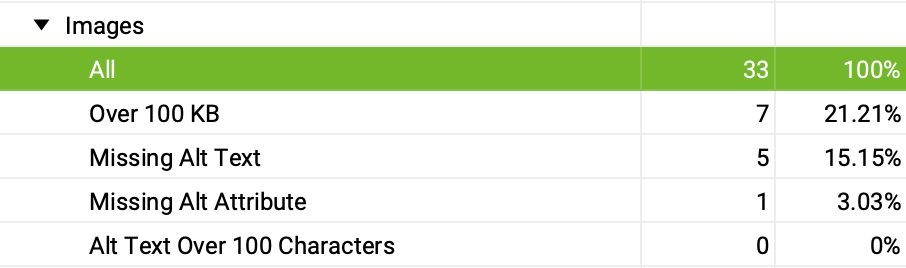

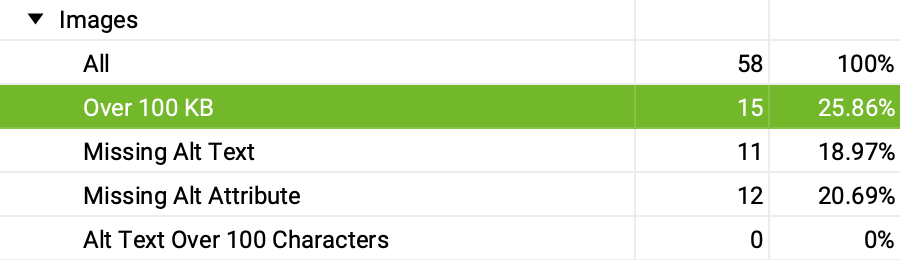

Screaming Frog can also crawl your website and tell you which images are missing alt text:

For those that are missing or need tweaking, these are our recommendations:

- Be descriptive and accurate

- Ensure it is relevant to wider page content

- Keep it short and concise – no longer than 125 characters

- Use keywords if appropriate but avoid keyword stuffing (you’re going to get sick of me saying this haha!)

- Avoid using phrases such as “an image of” as Google and screen readers will recognise images and pictures anyway
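These recommendations are easy to turn into an automated check. The sketch below is illustrative only: `audit_alt_text` and the list of filler phrases are our own assumptions, and it can’t tell you whether the alt text is actually accurate.

```python
from html.parser import HTMLParser

ALT_MAX = 125  # character limit from the recommendations above
# Assumed examples of filler openings; extend the tuple to suit your own content
FILLER_PREFIXES = ("an image of", "image of", "a picture of", "picture of")


class ImageParser(HTMLParser):
    """Collects the src and alt attribute of every <img> on the page."""

    def __init__(self):
        super().__init__()
        self.images = []  # list of (src, alt) pairs; alt is None when absent

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attrs = dict(attrs)
            self.images.append((attrs.get("src", ""), attrs.get("alt")))


def audit_alt_text(html):
    """Return (src, issue) pairs for images that break the recommendations."""
    parser = ImageParser()
    parser.feed(html)
    issues = []
    for src, alt in parser.images:
        alt = (alt or "").strip()
        if not alt:
            # Note: purely decorative images may legitimately have empty alt text
            issues.append((src, "missing alt text"))
        elif len(alt) > ALT_MAX:
            issues.append((src, f"alt text is {len(alt)} characters (over {ALT_MAX})"))
        elif alt.lower().startswith(FILLER_PREFIXES):
            issues.append((src, "starts with a filler phrase"))
    return issues
```

Treat the output as a to-do list to review by hand, since some flagged images (decorative icons, for instance) may be fine as they are.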

3. Duplicate content

This is when the same content appears in more than one location – that includes on your own website or someone else’s, regardless of whether you’ve copied their content or they’ve copied yours. Either way, it’s duplicate content and it could harm your rankings.

Not only is duplicate content useless and confusing for searchers, but search engines will also find it difficult to determine which page should be returned for particular search queries.

The goal of search engines is to return the best page possible for a user and their search query. Duplicate content gets in the way of this, so search engines may even choose to exclude some pages, causing you to lose out on potential search traffic.

How to check for duplicate content

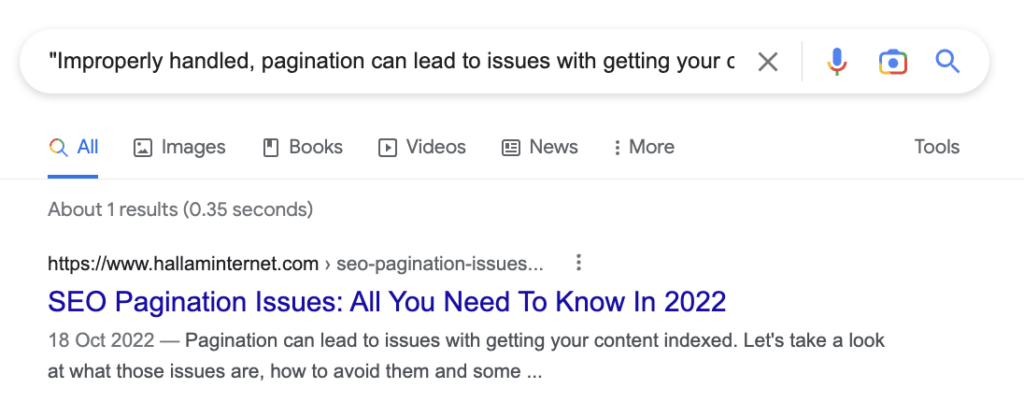

A really quick and easy way to check for duplicate content is to copy a few words from a sentence on a page and add them to a Google search using quotation marks.

Here’s an example of this using our pagination blog. When we searched for the first sentence, Google returned only one result, which is exactly what we want:

If this was duplicate content, you would see more than one result, but it would be difficult to tell which is the original source.

However, when you’re writing content, you may accidentally make it too similar to others, especially if you’re using competitor research to gain inspiration.

There are a couple of free tools that can help separate your content out from others:

- Siteliner – this tool lets you scan 250 pages of your website (there is a Premium option available) for not only duplicate content, but also things like broken links, page load and speed and word count.

- SmallSEOTools – they offer lots of useful tools and checkers for SEOs, but the plagiarism checker lets you check 1,000 words to see how much of the content is plagiarised or unique.
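To get a feel for what “too similar” means, you can compare two pieces of text yourself. The sketch below uses overlapping five-word phrases (often called shingles) and Jaccard similarity. This is one common technique we’ve picked for illustration, not how Siteliner, SmallSEOTools or Google actually measure duplication.

```python
import re


def shingles(text, size=5):
    """Split text into the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {" ".join(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b, size=5):
    """Jaccard similarity of two texts: 0.0 means no shared phrases, 1.0 means identical."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a or not b:
        return 0.0  # texts shorter than one shingle cannot be compared
    return len(a & b) / len(a | b)
```

There’s no official threshold, so use the score to rank pages for a manual review rather than as a pass or fail.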

4. Page speed

Google wants to provide users with content that provides relevant answers quickly. That means speed. We’ve all clicked on websites that take an age to load and more often than not, we leave the page to go elsewhere.

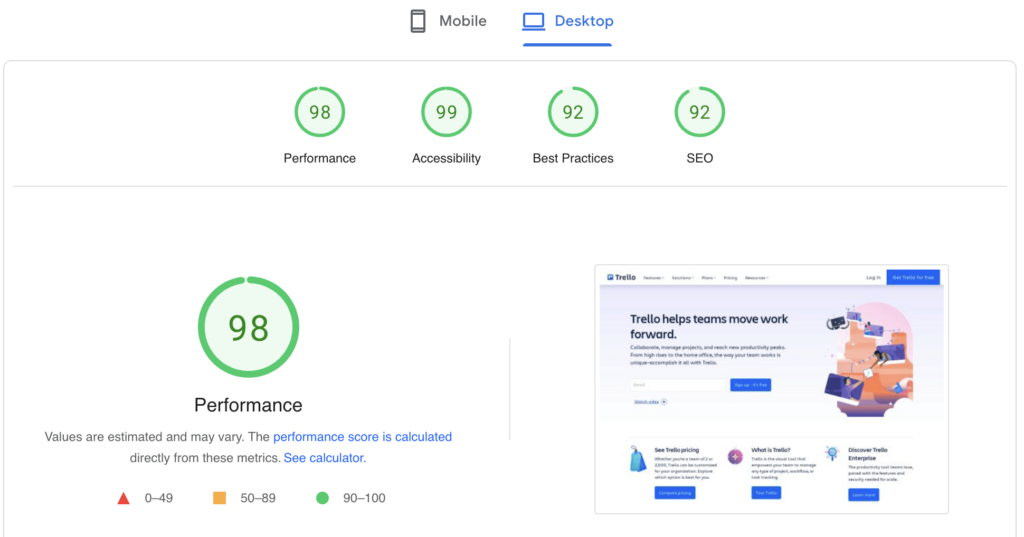

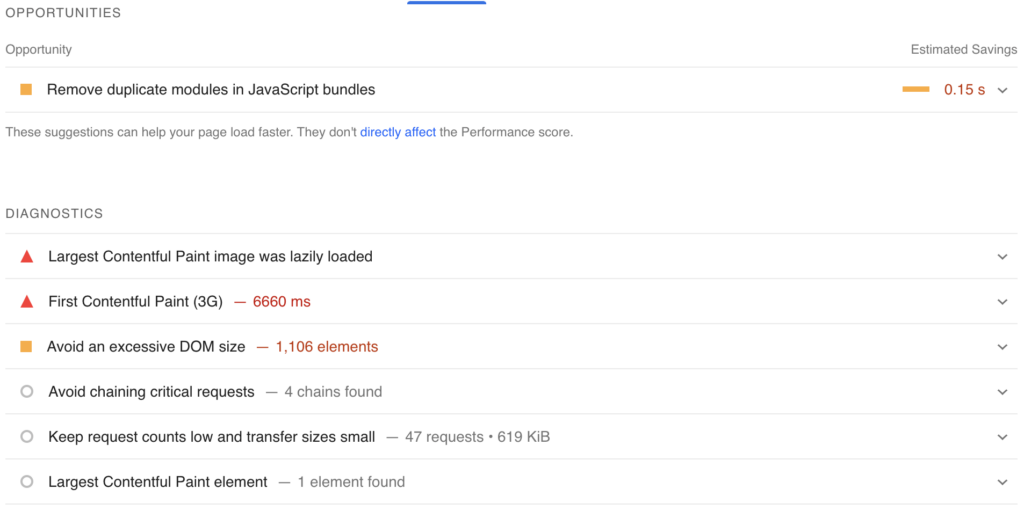

There are loads of free tools for checking the speed of your site but we’d recommend Google’s PageSpeed Insights or Pingdom Website Speed Test, both of which will tell you a performance score for both mobile and desktop:

As well as a list of suggestions for a developer to work on in order to improve your page load speed score:

While the tools are free to use, asking your developer to make the changes may not be, whether that’s an internal resource or an external development agency.

However, if the site has a really poor speed score, I’d suggest asking your web development team to fix these issues. It’s in their best interest to improve the site’s performance: not only is it their job, but you need to be giving your users the best experience possible, otherwise they’ll go elsewhere.
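If you need to track scores for several pages over time, PageSpeed Insights also has a public API. The sketch below reflects our understanding of the v5 endpoint and its response format, so check Google’s API reference before relying on it. The function names are our own, and heavier use may need an API key, which isn’t shown here.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint for version 5 of the PageSpeed Insights API
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url, strategy="mobile"):
    """Build the request URL; strategy is 'mobile' or 'desktop'."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def performance_score(psi_response):
    """Convert the 0-1 Lighthouse performance score in a response into 0-100."""
    score = psi_response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def fetch_score(page_url, strategy="mobile"):
    """Call the API over the network and return the performance score."""
    with urlopen(build_psi_url(page_url, strategy), timeout=60) as response:
        return performance_score(json.load(response))
```

Calling `fetch_score("https://www.example.com/")` would make a live request, so expect it to take several seconds per page.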

5. Core Web Vitals (CWV)

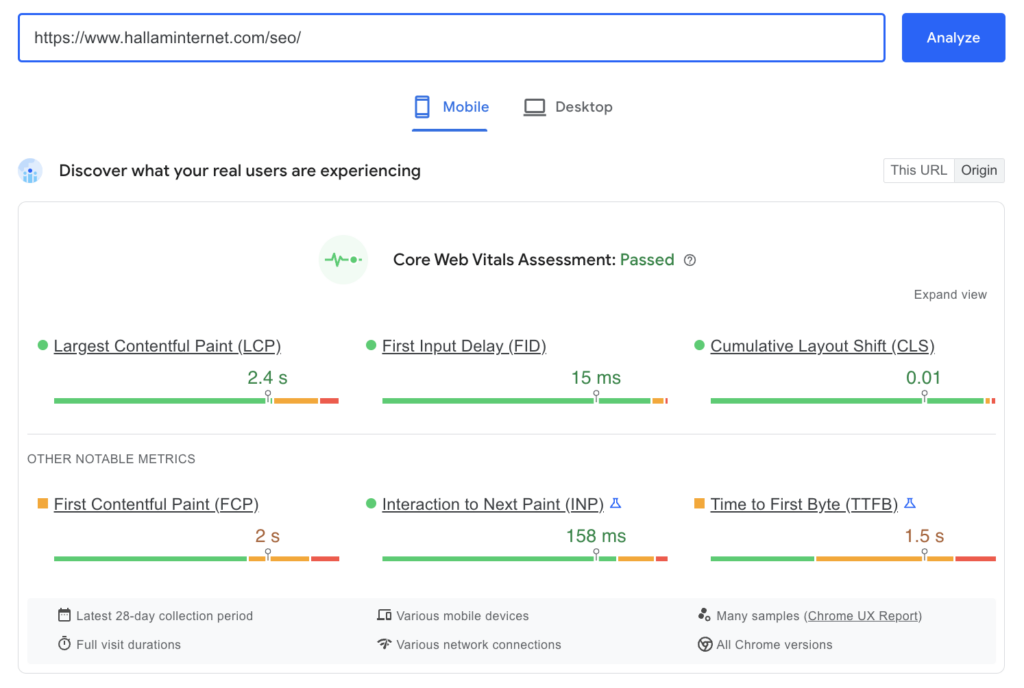

Core Web Vitals are factors that Google considers important in a webpage’s overall user experience. They are made up of three specific page speed and user interaction measurements: Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay (FID) in March 2024) and Cumulative Layout Shift (CLS).

You can check whether your website has passed the Core Web Vitals audit at the same time as testing your site’s speed as it’s also on Google PageSpeed Insights:

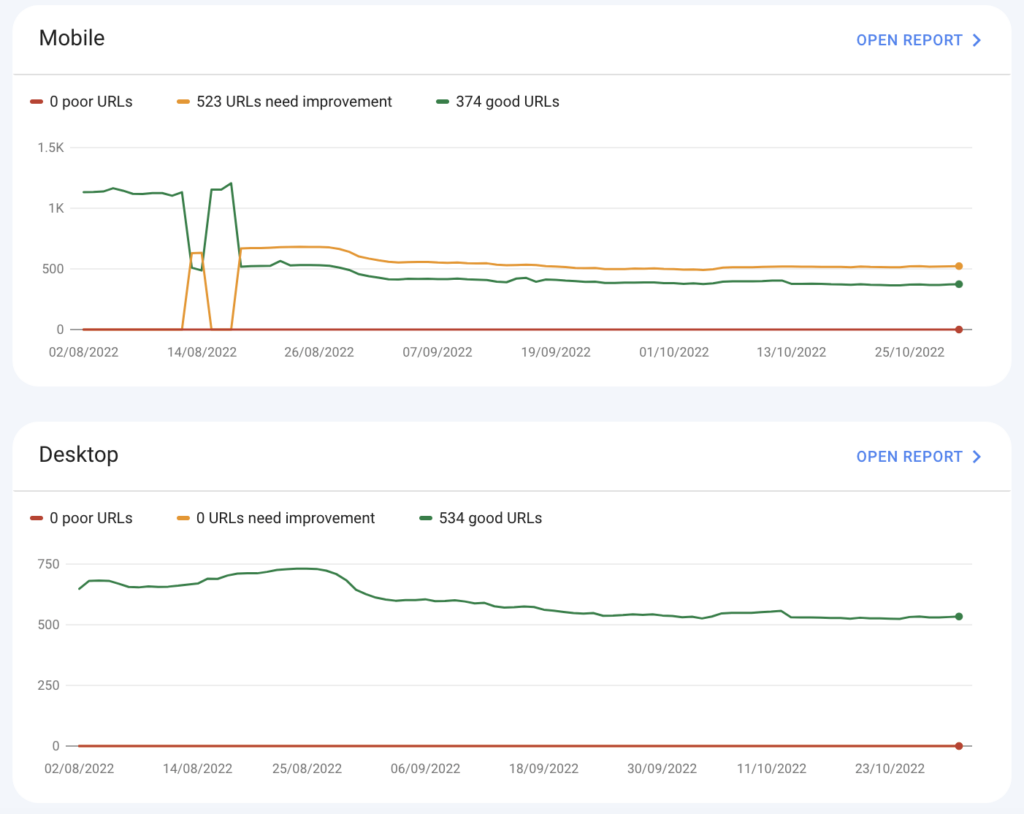

You can also find more insights on CWV and overall page experience on Google Search Console.

This part of GSC is particularly useful as it allows you to open specific reports for both mobile and desktop and see exactly which URLs need improvement.
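To make sense of the numbers in these reports, it helps to know the thresholds Google publishes for each metric. The sketch below encodes them as we understand them. INP is included alongside FID because Google has since adopted it as its responsiveness metric. The function names are hypothetical, and bear in mind that Google assesses real-user data at the 75th percentile rather than a single measurement.

```python
# (good, poor) boundaries: at or below "good" passes, above "poor" fails
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, in seconds
    "FID": (100, 300),   # First Input Delay, in milliseconds
    "INP": (200, 500),   # Interaction to Next Paint, in milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless
}


def rate_metric(name, value):
    """Classify one measurement as good, needs improvement or poor."""
    good, poor = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


def passes_core_web_vitals(measurements):
    """True only if every supplied metric is rated good."""
    return all(rate_metric(name, value) == "good" for name, value in measurements.items())
```

For instance, `rate_metric("LCP", 3.0)` falls between the two boundaries, so the page would be flagged as needing improvement.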

6. Mobile-friendliness

If you compared how many times you use your phone in a day to how many times you use your desktop, you wouldn’t be surprised to learn that having a mobile-friendly website is a ranking factor.

Google wants to promote sites that cater for users on mobile devices, especially now that it has a mobile-first index.

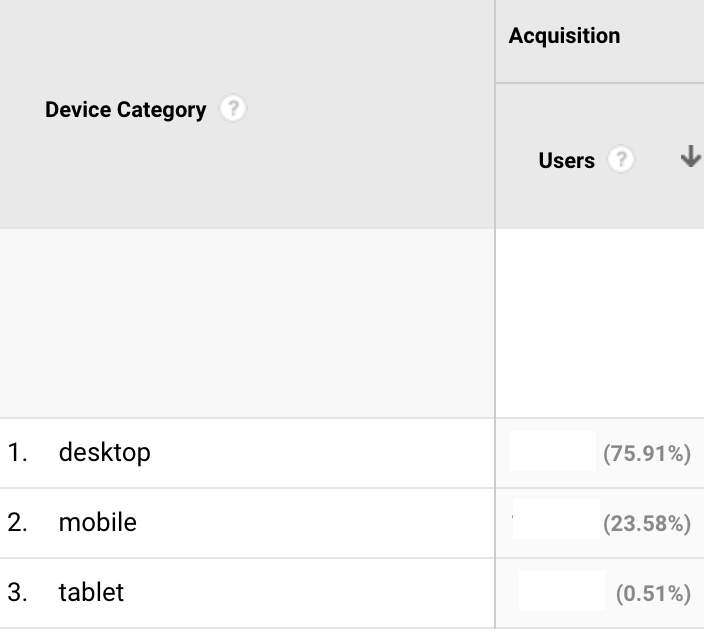

It’s always worth seeing the weighting of how many of your users use mobile, desktop or tablet and you can find this on Google Analytics.

From there, go to Audience > Mobile > Overview (for now, before we move to GA4) and select a time period to look at. For an accurate representation, it’s best to look at the past three to six months:

You can see that for this website, the majority of users are on desktop, but there are still enough mobile users to make it worth ensuring the website is mobile-friendly.

We’d recommend making sure it is regardless of what the analytics say. It’s just interesting to know the device split. Plus, some websites, such as B2C and ecommerce sites, will naturally have more mobile users than desktop, so it’s always worth checking.

To find out whether your site is mobile-friendly in Google’s eyes, there’s a very quick and simple test you can run through Google’s own mobile friendly test:

Hopefully, it’ll say that it is mobile-friendly, but if not, it’ll come up with some observations and improvements like the text is too small to read or clickable elements are too close together.

7. Image size

There’s no excuse to have huge image files on your site because there are so many free tools that will allow you to reduce the size and compress files without affecting image quality.

It can often be hard to get the balance right though. You need a good enough quality file to be useful to the user without them having to use all their data just to see a banner image.

Improving your image sizes will also help speed up your site and improve the page experience and your Core Web Vitals.

Screaming Frog can be a handy tool here again. Once you’ve crawled your site, you can export images that are over 100KB:

Or you could export all images, sort them so the biggest come first and compress them using tinypng or tinyjpg. There are also various image compression plugins for WordPress, such as Smush, which offers a free version.
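Once you have a crawl export, picking out the heavy images takes only a few lines. This is a simple sketch under our own assumptions: `oversized_images` is a made-up name, the export has been loaded as a dictionary of image URL to size in bytes, and 1KB is treated as 1,024 bytes.

```python
SIZE_LIMIT_BYTES = 100 * 1024  # the 100KB threshold, assuming 1KB = 1,024 bytes


def oversized_images(sizes_by_url, limit=SIZE_LIMIT_BYTES):
    """Return (url, size) pairs above the limit, biggest first."""
    big = [(url, size) for url, size in sizes_by_url.items() if size > limit]
    return sorted(big, key=lambda item: item[1], reverse=True)
```

Working through the list from the top means the images that slow your pages down the most get compressed first.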

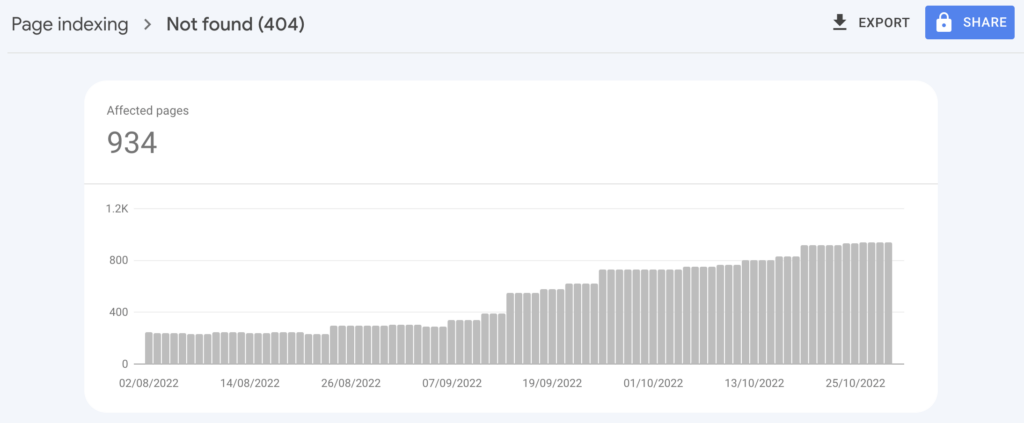

8. Broken pages

It’s important to keep tabs on pages that were once live but now are not. Broken pages (or 404 pages/errors) can occur for many reasons, such as accidental deletion or a URL change.

Although having 404 pages doesn’t in itself impact rankings, the pages could still be indexed, generate traffic and have inbound links. If you lose these pages, you’ll lose the traffic and any value the link was passing to your website, on top of giving the user a poor experience.

Therefore it’s always best practice as part of an SEO health check to review your broken pages on a regular basis and redirect them to a relevant page.

You can use Google Search Console to identify any 404 pages under the page indexing report:

However, don’t be alarmed if the number is quite large as it’s not always a bad thing to have a 404 page. Often 404 pages may be unimportant URLs, and if they’re not indexed, people won’t find them via search anyway.

To determine whether you need to redirect a 404, it’s always best to look at them on a page-by-page basis as they could all be 404ing for their own reason which may not need to be fixed.

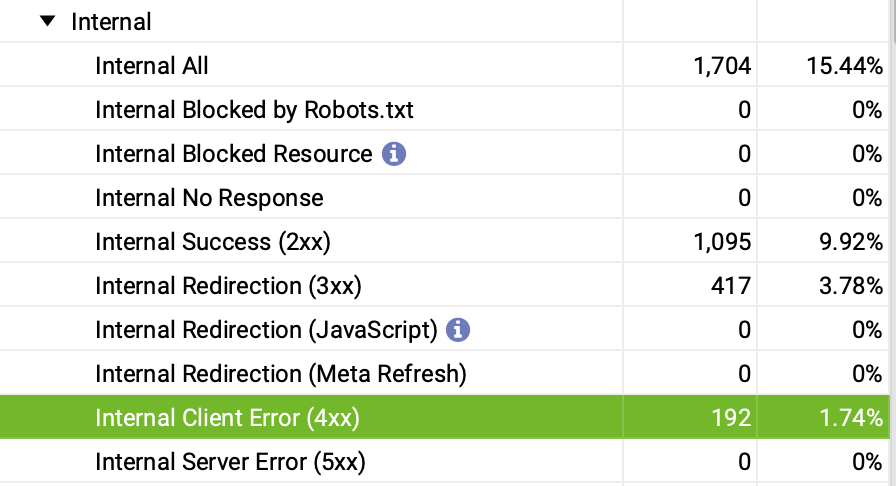

You can use Screaming Frog to check for status codes:

You can also further check these URLs by pasting them into httpstatus and, if it’s best to do so, create a spreadsheet of the URLs that are 404ing with another column for their destination pages to send to your development team for redirection.
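The redirect spreadsheet can be generated rather than typed out by hand. The sketch below is only an illustration: `redirect_sheet` is a hypothetical helper that assumes you already have each URL’s status code (from Screaming Frog or httpstatus, for example) and leaves the destination blank wherever you haven’t chosen one yet.

```python
import csv
import io


def redirect_sheet(status_by_url, destinations=None):
    """Build CSV text listing every 404 URL with a column for its redirect target."""
    destinations = destinations or {}
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["broken_url", "status", "redirect_to"])
    for url, status in sorted(status_by_url.items()):
        if status == 404:
            # Blank destination means the URL still needs a page-by-page decision
            writer.writerow([url, status, destinations.get(url, "")])
    return buffer.getvalue()
```

Save the returned text as a `.csv` file and it will open in any spreadsheet tool, ready to send to your development team.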

9. HTTP vs. HTTPS

If you see HTTPS before your domain name, that means your website uses SSL (or its successor, TLS) to move data. In other words, the data is encrypted and more secure than HTTP – this is extremely important for users, especially if they’re adding payment details onto your site.

As this provides more security for the user, Google is more likely to promote a site that uses HTTPS over one that uses HTTP.

If your website doesn’t use HTTPS, don’t panic. It may be that there’s no business case for your website to change over to HTTPS.

The acid test is to ask yourself: “If I were a customer coming to my website, would I want any data I entered to be encrypted and secure? And do I want them to be shown a sign that says ‘site not secure’?”

Either way, I’d recommend making the move to HTTPS.

Google certainly seems to agree, and because of this HTTPS is an expectation of websites now. Some browsers, such as Google Chrome, even warn users if the site they are entering is not on HTTPS.
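If you’re planning the move, a crawl export makes it easy to see which URLs are still on HTTP. A minimal sketch, with function names of our own choosing, assuming you have a plain list of URLs to hand:

```python
from urllib.parse import urlsplit


def insecure_urls(urls):
    """Return the URLs from the list that still use plain HTTP."""
    return [url for url in urls if urlsplit(url).scheme == "http"]


def https_equivalent(url):
    """Return the same URL with its scheme switched to HTTPS."""
    return urlsplit(url)._replace(scheme="https").geturl()
```

This only rewrites the address. The site itself still needs a valid certificate and redirects from the old HTTP URLs, which is a job for your developer or hosting provider.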

10. Robots.txt

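Robots.txt is a file that acts as a set of directions for Google and other bots. It tells them which parts of your website they can and can’t crawl, as well as the location of your sitemap. Bear in mind that it controls crawling rather than indexing: a blocked URL can still appear in search results if other pages link to it.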

Google ideally wants to access everything so it can choose what is and isn’t relevant, but you may still decide that certain areas of your site should be off limits to search engines, such as client login pages, account pages and thank you pages.

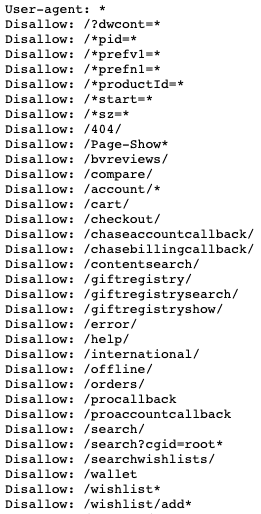

Using Patagonia’s robots.txt file (https://eu.patagonia.com/robots.txt) as an example, they don’t want robots wasting crawl budget, time and energy crawling pages to do with wishlists, accounts, checkout, carts etc.

What you’re doing is allowing or restricting access to the pages on your website. It’s easy to accidentally write a snippet of code which tells Google not to crawl the entire site (you’d be surprised how many times we’ve seen this before).

If you have a robots.txt file, it should be located on the root of your domain. For example, “www.example.com/robots.txt”.

You can easily check this file by using Google’s robots.txt file tester:

This will show you if there is a robots.txt file and if there are any suggestions for improvements. If you’re in doubt as to whether a page is accessible, you’ll also be able to type in the URL to see if it’s blocked or not.
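You can run a similar check yourself with Python’s built-in `urllib.robotparser` module. The sketch below uses two made-up robots.txt files on example.com to show the difference between blocking a few sections and accidentally blocking everything. One caveat: Python’s parser uses simple prefix matching and doesn’t follow every rule Google does (wildcards, for example), so treat it as a rough check.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Check whether the given robots.txt text allows the user agent to crawl a URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical file that blocks only the account and checkout areas
SELECTIVE = """\
User-agent: *
Disallow: /account/
Disallow: /checkout/
Sitemap: https://www.example.com/sitemap.xml
"""

# The accidental version: a single slash blocks the entire site
BLOCK_EVERYTHING = """\
User-agent: *
Disallow: /
"""
```

With `SELECTIVE`, a blog URL stays crawlable while anything under `/account/` is blocked. With `BLOCK_EVERYTHING`, every URL on the site comes back as blocked, which is exactly the mistake to watch out for.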

Final thoughts

Whilst the SEO tools and websites provided in this post are free, some do come with limitations, so we’d recommend trying out a few (most come with free trials) before seeing which one you prefer.

It’s worth investing in a tool that can really help you maximise your SEO efforts. A few of our favourites are Ahrefs, Semrush, ContentKing and SEOmonitor, but there’s loads more to choose from.

I hope you found this guide on how to carry out an SEO health check useful, but if you need some further advice, please don’t hesitate to get in touch with our team.